- •Contributors

- •Table of Contents

- •Preface

- •Technical requirements

- •Installing a Power BI gateway

- •Getting ready

- •How it works

- •Authentication to data sources

- •Getting ready

- •How it works

- •Main challenges that Power Query solves

- •Getting ready

- •Technical requirements

- •Getting data and connector navigation

- •Getting ready

- •Creating a query from files

- •Getting ready

- •How it works...

- •Creating a query from a folder

- •Getting ready

- •How it works...

- •Creating a query from a database

- •Getting ready

- •How it works...

- •Creating a query from a website

- •Getting ready

- •How it works...

- •Technical requirements

- •Exploring Power Query Editor

- •Getting ready

- •Managing columns

- •Getting ready

- •Using data profiling tools

- •Getting ready

- •Using Queries pane shortcuts

- •Getting ready

- •Using Query Settings pane shortcuts

- •Getting ready

- •Using Schema view and Diagram view

- •Getting ready

- •Technical requirements

- •Formatting data types

- •Getting ready

- •Using first rows as headers

- •Getting ready

- •Grouping data

- •Getting ready

- •Unpivoting and pivoting columns

- •Getting ready

- •Filling empty rows

- •Getting ready

- •Splitting columns

- •Getting ready

- •Extracting data

- •Getting ready

- •Parsing JSON or XML

- •Getting ready

- •Exploring artificial intelligence insights

- •Getting ready

- •Technical requirements

- •Merging queries

- •Getting ready

- •Joining methods

- •Getting ready

- •Appending queries

- •Getting ready

- •Combining multiple files

- •Getting ready

- •Using the Query Dependencies view

- •Getting ready

- •Technical requirements

- •Setting up parameters

- •Getting ready

- •Filtering with parameters

- •Getting ready

- •Folding queries

- •Getting ready

- •Leveraging incremental refresh and folding

- •Getting ready

- •Disabling query load

- •Getting ready

- •Technical requirements

- •Using M syntax and the Advanced Editor

- •Getting ready

- •Using M and DAX – differences

- •Getting ready

- •Using M on existing queries

- •Getting ready

- •Writing queries with M

- •Getting ready

- •Creating tables in M

- •Getting ready

- •Leveraging M – tips and tricks

- •Getting ready

- •Technical requirements

- •Adding columns from examples

- •Getting ready

- •Adding conditional columns

- •Getting ready

- •Adding custom columns

- •Getting ready

- •Invoking custom functions

- •Getting ready

- •Clustering values

- •Getting ready

- •Technical requirements

- •Using Power BI dataflows

- •Getting ready

- •Centralizing ETL with dataflows

- •Getting ready

- •Building dataflows with Power BI Premium capabilities

- •Getting ready

- •Understanding dataflow best practices

- •Getting ready

- •Technical requirements

- •Exploring diagnostics options

- •Getting ready

- •Managing a diagnostics session

- •Getting ready

- •Designing a report with diagnostics results

- •Getting ready

- •There's more…

- •Using Diagnose as a Power Query step

- •Getting ready

- •Other Books You May Enjoy

- •Index

82 Data Exploration in Power Query

Remember that you can always go back and modify the Remove Columns step in the Apply Steps pane. In fact, Choose Columns decides by itself whether selecting or removing (other) columns is the most efficient action.

Using data profiling tools

You may deal with great amounts of data and need tools that allow you to quickly check data quality and distribution and get insights from columns' profiles.

Power Query offers an intuitive way of exploring data to identify bad data. Data profiling is especially convenient when you are working with large volumes of data and you want to quickly visualize the composition of that data.

Getting ready

For this recipe, you need to download the FactInternetSales2 CSV file into your local folder.

In this example, we will refer to the C:\Data folder.

How to do it…

Once you have opened your Power BI Desktop application, you are ready to perform the following steps:

1.Click on Get Data and select the Text/CSV connector.

2.Browse to your local folder where you downloaded the FactInternetSales2 CSV file and open it. A window with a preview of the data will pop up; click on

Transform data.

3.Browse the query ribbon and click on View:

Figure 3.17 – View tab

Using data profiling tools 83

4. Flag Column quality and observe the results:

Figure 3.18 – Column quality

This view shows three categorizations that define the content of the column in terms of quality, expressed in percentages:

•Valid: The percentage of valid data according to column data type

•Error: The percentage of rows with errors

•Empty: The percentage of empty rows

This information is based on the top 1000 rows, as you can observe at the bottom of the Power Query UI:

Figure 3.19 – Profiling based on the top 1000 rows

84Data Exploration in Power Query

5.Click on Column profiling based on top 1000 rows and select Column profiling based on entire data set:

Figure 3.20 – Profiling based on entire dataset

6. Now, observe the new values under the column names:

Figure 3.21 – Column quality on entire dataset

You will see different values from the ones based on the preview data. It is always important to check and not rely exclusively on the data quality profiled on the top 1000 rows.

7.Next, using your cursor, hover under the ProductKey column and see that a tooltip will appear:

Figure 3.22 – Tooltip on column quality

Using data profiling tools 85

You can see that there is a value that is compromising the column quality. In order to manage this error, we have to perform a set of actions. You could directly click on Remove Errors and remove the row affected by that error, but in this way, you could lose relevant information.

8. Click on the three dots (…) and click on Keep Errors:

Figure 3.23 – Keep Errors

9. In this way, you will be able to filter to see the rows affected by errors:

Figure 3.24 – Rows affected by errors

86Data Exploration in Power Query

10.Click on the value contained in the ProductKey column, and you will see the value that is causing the error:

Figure 3.25 – Error in data quality

You will see that the error was caused by a numeric value contaminated by a letter, and this error comes from the data source. It is possible to fix this at the Power Query level without changing the value on the data source, but by having a correct value for reporting purposes.

11.In order to fix this value, you can go back to the previous view with all data by deleting the Kept Errors and ProductKey steps from the APPLIED STEPS pane:

Figure 3.26 – Deleting applied steps

12. Select the ProductKey column, right-click on it, and click on Replace Errors…:

Figure 3.27 – Replace Errors

Using data profiling tools 87

13.Enter the value 480 in order to replace the error that we know to be 480b, as retrieved from previous steps:

Figure 3.28 – Replace Errors window

14.After this replacement is applied, you can see that now, from a data quality point of view, the column has no errors:

Figure 3.29 – Column quality

Next, we will focus on the Column distribution feature that provides additional information to the Column quality feature.

In order to see how this tool works, follow the next steps:

1. Browse the query ribbon, click on View, and flag Column distribution:

Figure 3.30 – Column distribution flagged

88Data Exploration in Power Query

2.You will see a section that shows a number of distinct and unique values in a column and a visualization showing the distribution of these values:

Figure 3.31 – Column distribution section

3.If you hover with your cursor on the section we are considering, you can see details on how many distinct and unique values that column has:

Figure 3.32 – Column distribution details

Using data profiling tools 89

Finally, we will focus on the Column profile feature. In order to see how to leverage this tool, follow the next steps:

1. Browse the query ribbon, click on View, and flag Column profile:

Figure 3.33 – Column profile flagged

2. You will see a section appearing at the bottom of the UI, as follows:

Figure 3.34 – Column profile section

90 Data Exploration in Power Query

This section gives additional details on the columns' content. On the left, you can see data other than that seen under Column quality and Column distribution, such as NaN values, Min and Max, Average, Standard deviation, and a count of even and odd numbers.

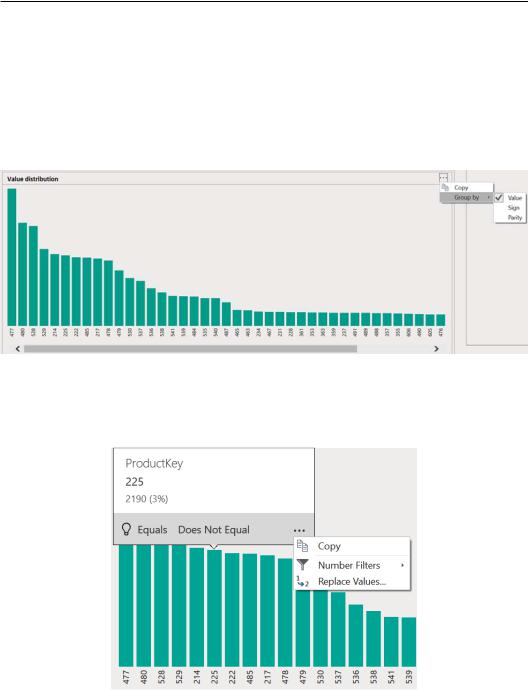

On the right, you can see a column chart with detailed values on data distribution.

3.If you click on the three dots (…), you will see that you can choose different grouping types according to the column's data type:

Figure 3.35 – Column distribution chart

4.If you hover with your cursor on the chart, a tooltip will appear with additional information on that column's values; if you click on the three dots (…), you can apply directly from here a set of transformations such as filtering data or replacing values:

Figure 3.36 – Column distribution tooltip details